Scientists from Osaka University, Japan, have discovered a way to use artificial intelligence (AI) to read our minds – quite literally.

By combining magnetic resonance imaging (MRI) with AI technology, the researchers were able to recreate visual images directly from human brain activity. What’s more, by using widely available Stable Diffusion technology, they were able to do so at a very low cost and with relatively little effort compared to other recent attempts to do this. The researchers’ findings are reported in a pre-print study.

Although researchers had already found ways to convert neural imaging into visual representations, doing so has generally required complex and expensive deep-learning technology, which must be “trained” and carefully calibrated. Even then, the representations produced are often sketchy, with poor fidelity.

The new method used by the Japanese researchers harnesses popular technology which has found widespread use in the generation of images using linguistic prompts. Over the last few months, social media platforms have been awash with images created using Stable Diffusion and other platforms like it. The technology is able to produce compelling, sometimes hyperrealistic images, with just a few carefully selected words. The technology can be used to produce static images or, with some tweaking, animations in popular styles such as anime.

While some in the art world have been supportive of this, many artists are fearful that it will replace them – and soon. Some have begun lobbying for this technology to be limited or even banned.

In September last year, the New York Times covered the fallout from that year’s Colorado State Fair art competition, which was won by an entry – Théâtre D’opéra Spatial – created using Midjourney, another popular AI system.

“Art is dead, dude,” the winner, Jason M. Allen, told the Times.

AI: NOW READING MINDS.

To produce their images, the Japanese researchers followed a two-stage process. First, they decoded a visual image from the MRI signals of their test subjects. Then they used the MRI signals to “decode latent text representations” which could be fed into the Stable Diffusion platform, like prompts, to enhance the quality of the initial visual images retrieved.
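For readers who want a feel for the two-stage idea, here is a toy sketch in Python. It is not the researchers' code. It assumes, purely for illustration, that each stage is a simple linear (ridge) decoder, and it runs on synthetic random numbers rather than real MRI scans. The names `fit_ridge`, `voxels`, `image_latents` and `text_latents`, and all of the sizes, are hypothetical. The final step, handing both decoded vectors to an image generator such as Stable Diffusion, is left out.

```python
import numpy as np


def fit_ridge(X, Y, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha * I)^-1 X^T Y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ Y)


rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only to keep the example small.
n_train, n_voxels = 200, 50
image_dim, text_dim = 16, 8

# Synthetic stand-ins for real data: a hidden linear relationship plus noise.
true_img = rng.normal(size=(n_voxels, image_dim))
true_txt = rng.normal(size=(n_voxels, text_dim))
voxels = rng.normal(size=(n_train, n_voxels))  # fake "brain activity"
image_latents = voxels @ true_img + 0.1 * rng.normal(size=(n_train, image_dim))
text_latents = voxels @ true_txt + 0.1 * rng.normal(size=(n_train, text_dim))

# Stage 1: learn a decoder from brain activity to a coarse image representation.
W_img = fit_ridge(voxels, image_latents)

# Stage 2: learn a decoder from brain activity to a prompt-like text representation.
W_txt = fit_ridge(voxels, text_latents)

# Decode a new, unseen "scan" into both representations.
new_scan = rng.normal(size=(1, n_voxels))
coarse_image_latent = new_scan @ W_img
text_conditioning = new_scan @ W_txt

# In a real pipeline these two vectors would be passed to an image generator,
# with the text representation acting like a prompt that refines the coarse image.
print(coarse_image_latent.shape, text_conditioning.shape)
```

The point of the sketch is only the shape of the process: one decoder for a rough picture, a second decoder for something that works like a prompt, and an off-the-shelf image generator to combine them.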

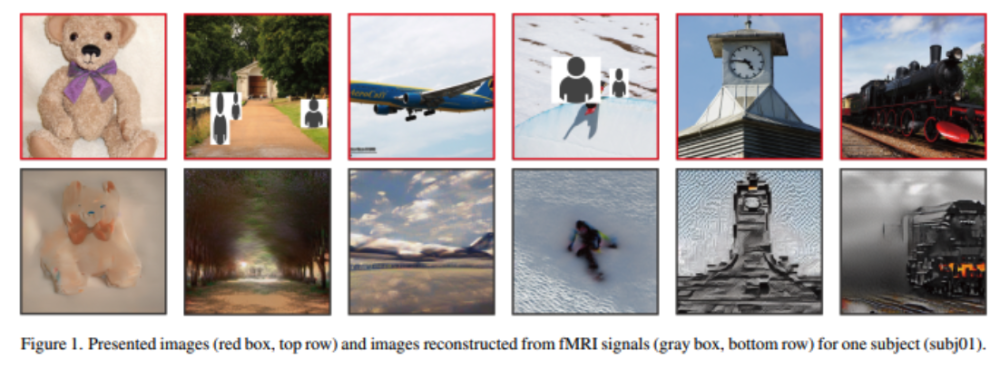

The results of this process can be seen in the series of images below.

A paper published in Nature last year used a very similar approach, albeit with more specialised diffusion technology, to reconstruct AI-generated faces from MRI data.

Although the reconstructions produced in the Nature study are clearly more faithful to the original representations, what is so remarkable about the new Japanese research is the fact that it was done using a platform that is already used by millions of people around the world to create images.

Stable Diffusion is a learning system – a so-called neural network – that trains itself using huge amounts of image data scraped directly from the vast repository of images on the internet.

Its creator, Emad Mostaque, has described it as a “generative search engine”. Unlike Google, which shows you images that already exist, Stable Diffusion can show you things that don’t.

“This is a Star Trek Holodeck moment,” according to Mostaque.

WHAT NEXT?

The disruptive power of AI has been on very prominent display over the last year. As a magazine editor, I for one can see the amazing potential for platforms like Stable Diffusion to provide high quality art at next-to-no cost or effort. If I want a picture of President Biden’s legendary showdown with Corn Pop, perhaps in the style of a 1980s action film, I simply have to ask and an AI platform like Midjourney will deliver. What’s not to like?

At the same time, it’s clear such technology has very real downsides. As noted, artists are sweating about losing commissions to AI.

Alongside AI-generated art, we’ve also heard a lot recently about ChatGPT, a technology which can write complicated essays from scratch in a matter of seconds, again from a simple prompt. The technology is already being used by university students to write high-quality essays that can fool their assessors. The entire university assessment system may have to be rethought.

You don’t have to be a fan of the Terminator or Matrix franchises, though, to see that the worrying potential uses of AI extend well beyond cheating university assessments or forcing mediocre artists out of business.

The website Popular Mechanics recently reported, for instance, that US Air Force drones can now recognise individual people’s faces from high in the air, using AI. The firm that has created the technology, Seattle-based RealNetworks, says the drones can use the technology to distinguish friend from foe, and that the software can be applied to rescue missions, patrolling and “domestic search operations”. An Israeli firm is working on similar technology that will help drones find the perfect angle for facial recognition.

How long until we see drones equipped with AI making decisions that lead to human harm, even death? Will AI drones be allowed to make life-or-death decisions without human intervention at all? Will they be used in domestic policing and surveillance – in what roles, and with what powers? These are classic questions that have been posed by futurists, science-fiction writers and ethicists, but it’s now increasingly clear that they are not hypotheticals or thought experiments – we’re talking when, not if, and probably much sooner than you’d like to think.

In Dubai, drones are already being used in domestic policing to identify poor drivers. In the US, police are using drones for a wide variety of purposes, from aiding in search and rescue and training officers, to collecting information on suspects and monitoring public events. Local authorities and rights groups are already pushing back against the use of facial-recognition technology. In 2021, Portland City Council adopted one of the strictest bans on the technology in the US, which might perhaps have had something to do with the city being an epicentre of disorder during the summer of “mostly peaceful” riots and beyond. The New York Civil Liberties Union published a report, also in 2021, warning of the widespread use of drones for surveillance in New York City and State.

The malign possibilities of AI mind-reading technology far outstrip those of drones equipped with facial recognition software. We’re not talking about potential infringements of our social privacy – where we go and what we do – but penetration of our very minds – the MKUltra mad scientist’s wet dream. In fact, at this stage it’s hard to see what applications the technology could really have other than to sharpen the blunter techniques of sensory deprivation, isolation and scopolamine in getting people to divulge their innermost secrets.

However clumsy the tech may seem now, the captivating leaps we’ve seen in the past year with technologies like GPT-3 and Stable Diffusion should leave us in no doubt about the direction this is headed. The technology will become more powerful, more accurate, more practical, and that’s a guarantee.

Pretty soon we’re going to find out that our wildest dreams, and also our worst nightmares, could be more real than we would ever have dared to think. What’s even more terrifying is that others may be able to see them too, whether we want them to or not.