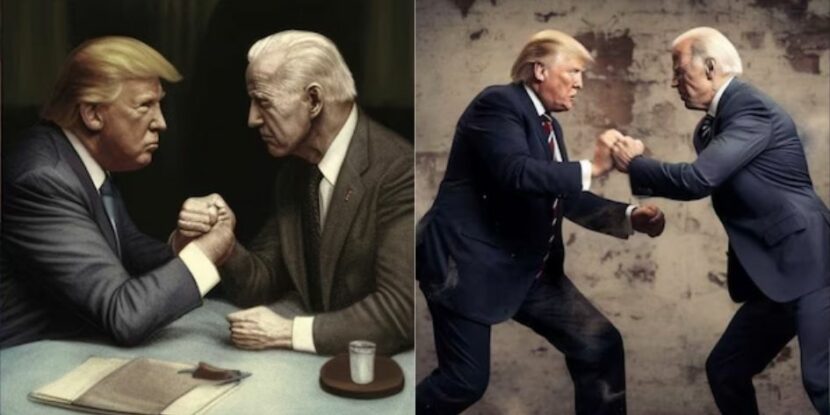

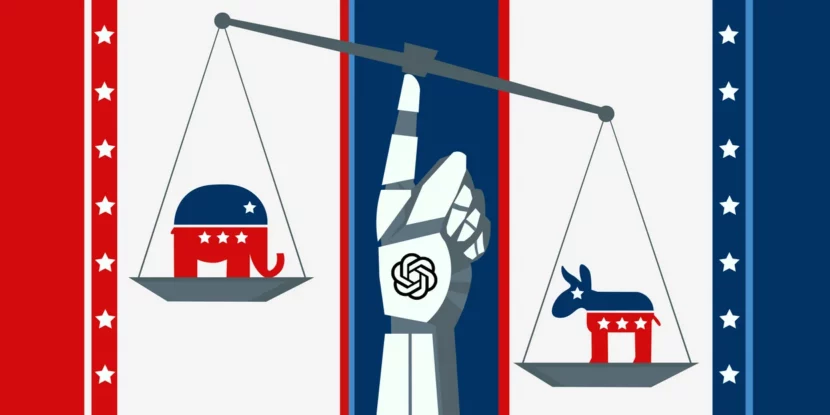

Artificial Intelligence (AI) image-generator Midjourney has blocked users from creating images featuring Donald Trump or Joe Biden, in case they are used to generate “misinformation.”

Without providing precise details, Midjourney CEO David Holz said during a digital office event that the new approach is a temporary measure against abuse.

“I don’t really care about political speech,” Holz said. “That’s not the purpose of Midjourney. It’s not that interesting to me. That said, I also don’t want to spend all of my time trying to police political speech. So we’re going to have to put our foot down on it a bit.”

The Associated Press (AP) found efforts to use the AI tool to generate an image of “Trump and Biden shaking hands at the beach” resulted in a “Banned Prompt Detected” warning, with a repeat attempt resulting in an “abuse alert.”

The so-called Center for Countering Digital Hate lobbied for the change, complaining “Midjourney seemed to have the fewest controls of any AI image-generator when it came to generating images of well-known political figures like Joe Biden and Donald Trump.”